By Gwen Schanker, Editorial Columnist

Last week, I discussed aggregation in my journalism ethics class. Aggregators collect journalistic information, from either a single source or a group of sources, and repurpose that material in a different form. There are several different types of aggregation: Google News aggregates stories in a feed based on an algorithm, Reddit is a form of user-curated aggregation where readers control content, and The Skimm is a type of aggregation where content is converted into new material as a newsletter. Taking in aggregated news rather than exploring a variety of sources is a timesaver. We approach news on a need-to-know basis, gathering information from as few paragraphs as possible.

This contrasts with the fundamental principles of journalism, which are characterized by detailed research, good reporting and well-crafted stories. When such work is aggregated, the question of attribution looms large. Should you ask permission first? Is aggregating work from another writer a form of flattery or a sign of blatant disregard for journalistic integrity?

These are the questions we grappled with in class last week, and the fact that we didn’t come to any definite conclusions points to a larger question: is aggregation good or bad for journalism? Either side could be argued, but I believe that there is an inherent value to aggregation that works in conjunction with journalistic information.

However, aggregation’s success depends on the care taken in creation. BuzzFeed’s regular post, “The Most Powerful Photos of the Week,” is an example of valuable aggregation. The creator of that article provides a unique service to the reader; compiling those photos from their original sources makes it possible for consumers to take in a collection of high-quality information rather than searching the Internet for what they want. Furthermore, compiling those photos may call deserved attention to the work of the photojournalists featured.

The success of this type of piece hinges on proper attribution. It’s common practice in aggregation to provide hyperlinks to the original source, whatever that source may be. However, the number of people who explore the subject beyond its aggregated form is minimal and is decreasing along with our collective attention span. It seems unfair that a less-detailed source of information should draw attention away from other, more comprehensive material.

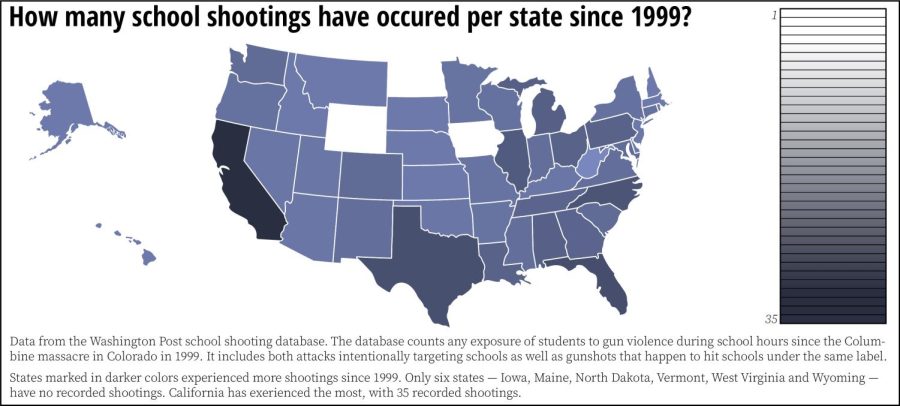

The growing field of data journalism provides another way to look at this issue. Data journalism is a form of aggregation that combines research with compilation in a unique way. Data journalists harness the power of aggregation by using visuals to make a complex story easier to digest. Though data journalism is a relatively new phenomenon, it has quickly become an integral part of news. Data is now used as either primary or supplemental material for many stories. It is particularly useful for communicating scientific information using dynamic and interactive visual tools.

Data journalism has evolved over time and will no doubt continue to do so as the field of journalism changes. Other forms of aggregation are just as likely to stick around and will develop as professional journalists grapple with the questions I addressed in my ethics class.

Aggregation has an undeniable power in today’s news environment, and its advantages should not be overlooked. Especially in the digital world, information can be compiled in incredible ways. The merit of that compilation depends on journalists’ ability to add value through their aggregated work, not to detract from the work of others.